Hugh McGuire, the LibriVox dude, has started a new project, datalibre.ca, whose tagline is: urging governments to make data about canada and canadians free and accessible to citizens. He wanted to know more about why I’ve been focusing lately on the issue of access to public data, so he emailed me some questions and has now posted the answers here.


A conversation with Lewis Shepherd about social software in the intelligence community

As senior technical officer for the Defense Intelligence Agency and chief of its requirements and research group, Lewis Shepherd has promoted and observed a remarkable transformation that’s occurring inside the U.S. intelligence community as analysts begin to embrace Web 2.0 practices. When we first met in March I jumped at the chance to quiz him about how Intellipedia, blogs, and related methods are taking root in the various agencies. In this week’s ITConversations podcast we replay that conversation.

The Intellipedia story is fairly well known, but in this podcast you’ll also hear about the viral adoption of blogging among analysts. It’s surprising in one way because as Lewis Shepherd points out, you wouldn’t expect a senior analyst who has built up a reputation as the reigning expert on some topic to be thrilled about having a junior analyst comment on — or edit! — the work. But on the other hand, he notes that this is essentially a scholarly community and the urge to publish, and to be cited, is strong. It’s a fascinating tale of culture change.

In addition to social software, we also discussed a range of initiatives in the realms of virtualization, service-oriented architecture, and the semantic Web.

Waiting for my air taxi

While traveling to Snowmass Village, Colorado today for the EDUCAUSE Seminars in Academic Computing, I listened to a pair of podcasts: Stewart Brand at PopTech and Esther Dyson at ITConversations. As often happens, I thought of questions I’d like to ask, and if I can bring those two onto my own show sometime I’ll do just that. In this particular case, I’d love to have a conversation at the intersection of the topics discussed on those podcasts. Stewart Brand’s topic was world urbanization. It’s a major theme for him lately, and has been the subject of several of the Long Now talks, including his own on cities and time, and Robert Neuwirth’s on the nature and dynamics of squatter cities. We’re becoming an urban planet, Brand says. The crossover moment, when more than half of humanity lives in the cities, may already have occurred, or else soon will. He thinks we’ll go far beyond it in this century, becoming a mostly urban world.

Of course Stewart Brand has been wrong before, as he freely admits. Decades ago he worried about the population explosion. But while he’s astonished by the doubling that’s occurred in his lifetime, he’s even more astonished to think that it was probably the last doubling, and that after leveling out at between 7 and 9 billion the world population is expected to sharply decline.

Could he be wrong again? Could humanity’s rush to the cities slow down or even reverse? Since the concentration of economic opportunity in cities is what brings people there, it would take a dispersal of economic opportunity to enable those who would prefer the countryside to remain there.

One powerful force that’s dispersing economic opportunity is of course the Internet. A decade ago there were a few lucky souls who could pull an income through a modem. Today there are lots more, and we’ve yet to see what may happen once high-bandwidth telepresence finally gets going.

But a second force for dispersion has yet to kick in at all. It is the Internetization of transportation — and specifically, of air travel. That’s where Esther Dyson comes in. She’s investing in several of the companies that are aiming to reinvent air travel in the ways described by James Fallows in his seminal book on this topic, Free Flight. In that vision of a possible future, a fleet of air taxis takes small groups of passengers directly from point to point, bypassing the dozen or so congested hubs and reactivating the thousands of small airports — some near big cities, many elsewhere.

There are two key technological enablers. First a new fleet of small planes that are lighter, faster, smarter, safer, and more fuel-efficient than the current fleet of general aviation craft with their decades-old designs.

The second enabler is the Internet’s ability to make demand visible, and to aggregate that demand. So, for example, I’m traveling today from Keene, NH to Aspen, CO. If there are a handful of fellow travelers wanting to go between those two endpoints — or between, say, 40-mile-radius circles surrounding them, which circles might contain several small airports — we’d use the Internet to rendezvous with one another and with an air taxi.
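To make the rendezvous idea concrete, here is a minimal sketch of matching travelers whose start and end points both fall inside 40-mile circles around two endpoints. Everything in it is an assumption for illustration: the request format, the function names, and the coordinates, which are only approximate.

```python
import math

EARTH_RADIUS_MILES = 3958.8

def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance between two latitude/longitude points, in miles."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))

def matching_travelers(requests, origin, destination, radius_miles=40):
    """Keep requests that start near origin and end near destination."""
    return [
        r for r in requests
        if haversine_miles(*r["from"], *origin) <= radius_miles
        and haversine_miles(*r["to"], *destination) <= radius_miles
    ]

# Approximate coordinates, for illustration only.
KEENE = (42.93, -72.28)
ASPEN = (39.19, -106.82)

# Hypothetical travel requests: (lat, lon) pairs for start and end.
requests = [
    {"name": "traveler_a", "from": (42.85, -72.56), "to": (39.21, -106.94)},
    {"name": "traveler_b", "from": (42.36, -71.06), "to": (39.19, -106.82)},
]

matches = matching_travelers(requests, KEENE, ASPEN)
print([r["name"] for r in matches])
```

A real service would also have to match on dates and seat counts; this only shows the geographic half of the aggregation.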

For me that could be a huge win. There’s an airport not much more than a mile from my house with a runway that can land Air Force One, and in political seasons sometimes does. Years ago we had commercial air service to Boston and New York thanks to a federal essential air service subsidy, but that wasn’t enough to keep the operation going and now it’s gone. So my day looks like this:

1. Drive to Boston’s Logan airport: 2 hours. I can sometimes fly from Manchester, NH, which is only an hour and a quarter, but almost never directly to anywhere. And since today already involves an unavoidable hub — Denver — I’m avoiding a second by going directly there.

2. Logan’s economy lot to the United terminal: 20 minutes. It can be worse, but today the bus was there waiting and left quickly.

3. Clear security and wait: 1.5 hours.

4. Logan to Denver: 4 hours.

5. Layover in Denver: 2 hours.

6. Denver to Aspen: 1 hour.

7. Cab to Snowmass: 30 minutes.

The hypothetical air taxi scenario looks like this:

1. Drive to Keene airport: 6 minutes.

2. Clear security and wait: 30 minutes.

3. Passenger pickup in Amherst, MA: 30 minutes.

4. Passenger pickup in Albany, NY: 30 minutes.

5. Albany to Aspen: 7 hours.

6. Cab to Snowmass: 30 minutes.

Let’s compare the two scenarios:

| Step (hours) | conventional | air taxi | difference |
|---|---|---|---|
| Drive to airport | 2 | 0.1 | (1.90) |
| Shuttle bus | 0.33 | 0 | (0.33) |
| Security and waiting | 1.5 | 0.5 | (1.00) |
| Passenger pickup (Amherst) | 0 | 0.5 | 0.50 |
| Passenger pickup (Albany) | 0 | 0.5 | 0.50 |
| Main flight | 4 | 7 | 3.00 |
| Layover | 2 | 0 | (2.00) |
| Secondary flight | 1 | 0 | (1.00) |
| Cab | 0.5 | 0.5 | 0.00 |
| Total | 11.33 | 9.1 | (2.23) |

According to this back-of-the-envelope calculation, the air taxi scenario isn’t a huge win. It only shaves a couple of hours off the trip, and we haven’t even considered how the prices of the two scenarios will compare.
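For anyone who wants to check the arithmetic, here is a small sketch that totals the two itineraries. The hours come straight from the comparison above (20 minutes rounded to 0.33, 6 minutes to 0.1); the variable and function names are my own.

```python
# (leg, conventional hours, air taxi hours), taken from the two itineraries.
LEGS = [
    ("Drive to airport", 2.0, 0.1),
    ("Shuttle bus", 0.33, 0.0),
    ("Security and waiting", 1.5, 0.5),
    ("Passenger pickup (Amherst)", 0.0, 0.5),
    ("Passenger pickup (Albany)", 0.0, 0.5),
    ("Main flight", 4.0, 7.0),
    ("Layover", 2.0, 0.0),
    ("Secondary flight", 1.0, 0.0),
    ("Cab", 0.5, 0.5),
]

def totals(legs):
    """Return (conventional total, air taxi total, hours saved by air taxi)."""
    conventional = round(sum(c for _, c, _ in legs), 2)
    air_taxi = round(sum(a for _, _, a in legs), 2)
    return conventional, air_taxi, round(conventional - air_taxi, 2)

conventional, air_taxi, saved = totals(LEGS)
print(f"conventional: {conventional} h, air taxi: {air_taxi} h, saved: {saved} h")
```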

But if I put on my Clayton Christensen hat and look at this from the perspective of disruptive technology, it seems that the positive values in the difference column are much less fungible than the negative values. In the conventional scenario, I don’t expect any significant reduction in the time it takes to get to, or through, hub airports. In the air taxi scenario, however, I can imagine significant reduction on two fronts. If this model starts to succeed, there will be more aggregatable demand and thus fewer required multi-hop passenger pickups. And there will also be more incentive to make smaller planes fly faster. As with other disruptive technologies the air taxi system at first underperforms the incumbent system, but has lots of headroom for improvement.

I have no idea if this will come to pass, or if I’ll live long enough to personally benefit from it. But it’s something I always think about on trips like this one. Looking out the window of the plane I can see a big world down there, full of many beautiful places that are mostly empty and — if Stewart Brand is right — are only going to get emptier.

Maybe it’s true that, given a choice and all other things being equal, most people would prefer to live in settlements of millions to tens of millions rather than tens of thousands to hundreds of thousands. But things aren’t equal, and while the quality of life I enjoy living in a settlement of tens of thousands is special in many ways, I pay a price for it. On travel days like today, I’m reminded that dealing with hub-and-spoke air travel is a fairly significant part of that price.

I don’t doubt that the world will continue to urbanize but I do wonder about the architecture of the emerging network of cities. Will the growth of megacities preclude the growth of towns and small cities, or can we flourish across this range of scales? The Internet is already enabling the latter in ways that I don’t think have yet been factored in to the predictions about world urbanization. Add to that the Internetization of air travel and things just might turn out rather differently than predicted.

PS: After writing this en route, my Denver to Aspen hop was cancelled due to thunderstorms. So it took another four hours to rent a car and drive 150 miles. On the bright side, six of us shared a minivan, and it was a wonderful group: a brain surgeon, a CTO, a psychologist, an artist, and a telecommunications and real estate entrepreneur. We talked the whole time in a way that rarely happens on planes but that, it struck me, might be more likely to happen in the more intimate cabin of an air taxi.

Old tunes, new opportunities

I play a bit of fingerstyle guitar as a hobby, and a while ago I found a nice arrangement of The Tennessee Waltz which I’ve been trying to learn. The other day I went to YouTube to check out some other arrangements. Wow. There’s a smoking version by Bonnie Raitt and Norah Jones, a classic Patti Page version, a jazzy version by Kirk Whalum, a soul rendition by Otis Redding, this sweet one by Dan Hardin, and a bunch more. It’s astonishing to be able to sample all these different arrangements. It’s also, very likely, an anomaly. What happened to Napster, and what’s happening to Internet radio, will very likely happen here too.

We can argue until the cows come home about fair use and the appropriate scope of copyright, but the current regime has serious momentum, and significant change could be a long way off.

Meanwhile, a profound new kind of collaboration — enabled by Internet video — is trying to emerge. Sure, you can use Internet video to share cute animal movies1, but you can also use it to share knowledge about lawnmower maintenance or — as Lucas Gonze notes here — guitar playing.

In that post, Lucas reacts to an NPR story about the YouTube takedown of video guitar lessons. And he writes:

When a music publisher prevents musicians from learning a song, they are destroying the value of the song. There’s no reason to learn the Smoke on the Water riff except that everybody else knows it, and cultural ubiquity isn’t possible unless learning is absolutely free and unencumbered. Notice that the song in the original quote is by the Rolling Stones, a band that couldn’t matter less if it weren’t part of pop culture canon.

One result of copyright extremism will be the disappearance of cultural icons like the Rolling Stones. They haven’t contributed anything fresh to the culture for close to forty years, and without third parties reusing their old work in ways that make it fresh they hardly exist. In terms of 2007 pop culture, all those covers of “Paint it black” *are* “Paint it black.”

This is why I am resurrecting 150-year old songs and posting them, along with sheet music, on my blog — it’s possible for those songs to be used as source material for new work.

Although most of my friends disagree with the premise that out-of-copyright material can interest modern audiences, Lucas had me at hello with this project. That’s probably because, though I wouldn’t have thought Episcopal hymns would be toe-tappers, I love to hear — and play — John Fahey’s arrangements of tunes like In Christ There Is No East Or West.

Now I learned that one from a book, so the arrangement is copyrighted by the publisher, although the tune itself is available for reuse. But as I watched and listened to all those different versions of The Tennessee Waltz, I couldn’t help but wonder what might happen if that dynamic were applied to out-of-copyright tunes. Can more of the old tunes be reborn? If so, will our new ability to share, teach, and learn turbocharge the creative process surrounding them? If so, will that process in turn lead to the production of new tunes? If so, will some of those new tunes achieve cultural ubiquity? If so, will some of those conceivably remain outside the copyright regime?

That’s a whole lot of ifs and, as I said, most of my friends think none of this will ever happen. As for me, I dunno. Maybe yes, maybe no. Either way, props to Lucas for having the vision and taking the shot.

1 At 41000 views and climbing steadily, I sometimes worry in dark moments that, when all is said and done, I’ll be known as “the guy who made that cute video with the kittens and the bunnies.”

The police station effect

Thanks to some really great comments on yesterday’s item I’ve taken another pass through the spreadsheet I got from the police department1. It looks like Chris Anderson and David French were exactly right to suggest a “police station effect” — namely, that there’s more crime at or near the police station.

Here’s a version of yesterday’s chart (with cleaner underlying data):

It’s focused on the old location of the police station which, you may recall, moved from Central Square in Jan 2006. If you thought the presence of the station would suppress the number of incidents, you wouldn’t find evidence for that here.

Now here’s the same thing focused on the new location of the police station:

That’s pretty clear!

There were two causes suggested.

1 (Chris): “The station was the place of the crime report and there was often no specific address.”

Yup. Of the 341 incidents within .1 mile of the new station, 315 were at the exact address.

2 (David): “This is where you end up when they let you out of the drunk tank.”

It’s possible to explore that spillover effect, but I’ll stop here and call out another excellent comment from Doug Finner:

If you get a big pile-o-data and don’t know everything about how the data was collected, it can be pretty close to impossible to do anything other than make very general observations. Trying to draw conclusions from data that is likely ‘dirty’ is often a fools errand. Probably the best you can do, is find interesting trends and then try and get good clean data collected – the whole scientific method thing.

Indeed. For this round I took a much more critical look at the address data. I discarded the fair number of junk addresses that resolved erroneously to the city center. And because the addresses in the file didn’t specify “St” or “Rd” there were systematic problems — particularly in the case of Marlboro which was resolving to Rd rather than St.
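One way to handle the missing-suffix problem is a small override table consulted before geocoding. This is only a sketch under assumptions: the table, the function name, and the address format are hypothetical, and Marlboro is the only street the text actually names.

```python
# Hypothetical override table; only the Marlboro entry comes from the text.
SUFFIX_OVERRIDES = {"marlboro": "St"}

def add_suffix(address, overrides=SUFFIX_OVERRIDES):
    """Append a known street suffix.

    Returns (address, changed) so the caller can record which rows
    were disambiguated and which were passed through untouched.
    """
    cleaned = address.strip()
    number, _, street = cleaned.partition(" ")
    suffix = overrides.get(street.lower())
    if suffix is None:
        return cleaned, False
    return f"{number} {street} {suffix}", True

print(add_suffix("111 Marlboro"))
print(add_suffix("3 Washington"))
```

Returning the flag alongside the address makes it easy to report back how many rows needed this kind of repair.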

As Doug Finner suggests, it would be wise at this point to hand back the file augmented not only with latitude/longitude coordinates, but also with indications of how clean or dirty the geocoding was, and recommendations on how to improve it.

Meanwhile, the toolsmith in me is getting fired up with all kinds of ideas. For example, when I processed the raw file to create this categorized stack graph I wound up creating an ad-hoc system of piped filters in Python. Each one takes a list of rows and returns a transformed list of rows. Here are some of them:

- removeIncidentnums

- dedupeCasenums

- adjustDates

- trimDescs

- removeSingletonDescs

- addCategories

- addMonthlyCounts
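Here is a rough sketch of what such a chain of filters might look like. The filter names are taken from the list above, but their bodies, the column names (`casenum`, `desc`), and the `pipe` helper are guesses for illustration, not the actual code.

```python
from collections import Counter
from functools import reduce

def dedupeCasenums(rows):
    """Keep only the first row seen for each case number."""
    seen, out = set(), []
    for row in rows:
        if row["casenum"] not in seen:
            seen.add(row["casenum"])
            out.append(row)
    return out

def trimDescs(rows):
    """Strip stray whitespace from each description."""
    return [{**row, "desc": row["desc"].strip()} for row in rows]

def removeSingletonDescs(rows):
    """Drop rows whose description occurs only once in the dataset."""
    counts = Counter(row["desc"] for row in rows)
    return [row for row in rows if counts[row["desc"]] > 1]

def pipe(rows, *filters):
    """Feed the rows through each filter in turn, left to right."""
    return reduce(lambda acc, f: f(acc), filters, rows)

raw = [
    {"casenum": "1", "desc": " THEFT "},
    {"casenum": "1", "desc": " THEFT "},
    {"casenum": "2", "desc": "THEFT"},
    {"casenum": "3", "desc": "ARSON"},
]
cleaned = pipe(raw, dedupeCasenums, trimDescs, removeSingletonDescs)
print(cleaned)
```

Because every filter has the same shape (list of rows in, list of rows out), the order can be changed or a step dropped without touching the others.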

All well and good. But this just begs for some kind of social treatment a la Pipes or Popfly, with a particular focus on the transformation of rectangular datasets.

I’m also thinking about ways to meld Python and Excel together more closely. So far, I’ve only relied on code generation — that is, using Python to write VBA macros to, for example, define named ranges. There’s also the possibility of outside-in automation, where Python drives Excel through its automation interface. But then I got to wondering: Will there be a role for IronPython (or IronRuby) here, someday, such that you could use these languages inside Excel? That’d be very cool.
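The code-generation approach can be sketched in a few lines. This assumes the generated macro uses the Excel object model's `Names.Add` method with its `Name` and `RefersTo` arguments; the function name, macro name, and the example range are made up.

```python
def named_range_macro(macro_name, ranges):
    """Return VBA source for a macro that defines each named range.

    ranges maps a range name to a reference such as 'Sheet1!$A$2:$A$100'.
    """
    lines = [f"Sub {macro_name}()"]
    for name, ref in ranges.items():
        lines.append(
            f'    ActiveWorkbook.Names.Add Name:="{name}", RefersTo:="={ref}"'
        )
    lines.append("End Sub")
    return "\n".join(lines)

# Hypothetical range name and location, for illustration only.
macro = named_range_macro("DefineRanges", {"CaseDates": "Sheet1!$A$2:$A$100"})
print(macro)
```

The printed text can be pasted into a VBA module and run there; Python never talks to Excel directly in this style.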

1 Yes, I will publish this data once I’ve had a chance to show my work to the police and get their approval.

A geographic analysis of local crime data

If you’ve been following the continuing saga of my exploration of local crime statistics [1, 2, 3, 4], here’s an update. The police department has provided a spreadsheet containing a complete dump of reported crimes back to 2002, including the location (address) information I was looking for.

This dataset includes about 15000 rows, which is far too many to show on a map without some fancy filtering. But while pondering what to do about that, I realized I could try to answer two questions that folks have been asking:

1. Is there more crime in the past few years?

2. If so, is the increase localized to the downtown area?

The second question arises because the police department relocated, in 2006, from the center of town to a peripheral address. It’s been suggested that there is, as a result, less of a police presence downtown, and thus more crime.

The answer to the first question appears to be yes. As Martin Wattenberg observes, in his comments on that visualization, there’s a striking seasonal pattern: strong dips in winter, weak dips in summer. He asks:

Is this weather-related (potential criminals thinking “It’s too cold to mug anyone” in January)? Are there population changes in Keene, like tourism or college students, that would cause this?

I think he’s right on both counts. It’s cold here in winter, and it’s a college town.

More broadly though, the 2006 peak is noticeably higher than prior years’ peaks, and though we’re only in the middle of 2007, it’s tracking the 2006 pattern. Clearly crime is up since 2006.

But does the likelihood of downtown crime correlate with the relocation of the police department? According to this chart, there is, if anything, a reverse correlation:

Here’s how I made that chart:

1. Geocode the addresses to latitude/longitude locations.

2. Compute the distance of each location from the town center.

3. Group the locations into zones.

4. Chart the percent of crimes in each zone.
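Steps 2 through 4 can be sketched as follows, assuming the geocoding in step 1 has already produced latitude/longitude pairs. The zone boundaries, the center coordinates, and the function names are assumptions for illustration. The distance uses a flat-earth approximation, which should be adequate at the scale of a single town.

```python
import math
from bisect import bisect_left
from collections import Counter

EARTH_RADIUS_MILES = 3958.8

def miles_from(center, point):
    """Approximate distance in miles (equirectangular projection)."""
    lat0, lon0 = map(math.radians, center)
    lat1, lon1 = map(math.radians, point)
    x = (lon1 - lon0) * math.cos((lat0 + lat1) / 2)
    y = lat1 - lat0
    return EARTH_RADIUS_MILES * math.hypot(x, y)

def percent_by_zone(points, center, edges):
    """Percent of points in each zone.

    Zone i covers distances up to and including edges[i]; zone len(edges)
    holds everything beyond the last edge.
    """
    zones = Counter(bisect_left(edges, miles_from(center, p)) for p in points)
    total = sum(zones.values())
    return {zone: 100.0 * n / total for zone, n in sorted(zones.items())}

# Hypothetical town center and incident locations.
center = (42.9337, -72.2781)
points = [center, center, (42.9387, -72.2781), (42.9837, -72.2781)]
edges = [0.25, 0.5, 1.0]  # zone boundaries in miles (assumed)

print(percent_by_zone(points, center, edges))
```

To compare before and after the relocation, the same function could be run once per year of data and the results charted side by side.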

I’ll reflect in a separate entry on the nature of that process, and on ways it could be made more accessible to the less technically inclined. But if this result proves to be valid, it’s a nice example of citizen use of public data. And of course if someone else’s analysis of the data (and of my methods) were to challenge my result and prove something different, that would be even better!

Can social tagging improve email?

I’ve had a brief fling with Taglocity, an Outlook add-in for tagging email, contacts, and tasks. You can of course already tag messages in Outlook using categories, and I do, but rarely, just as I’ve rarely used labels in Gmail. For me at least, tagging is most interesting and useful when it is social.

Consider, for example, the recent interaction around the publicdata tag in del.icio.us. I’m continuing to see new items show up in the global bucket, /tag/publicdata. Occasionally I add one of these to the list I’m curating in my private bucket, /judell/publicdata. There, I can see at a glance which of the items I’ve collected are of interest to others, and to how many others. Focusing on the Bureau of Justice crime data I’ve been using lately, I can see who else tagged it, I can observe the historical interest in that URL back to 2004, and I can notice that it was most recently tagged by somebody at Many Eyes. I can then compare the list that’s being curated by Many Eyes to the list that I’m curating.

So here’s the question: Can these effects occur in email? In theory they can, and Taglocity lays the foundation with a feature called traveling tags. This is actually an idea that I discussed long ago in my book Practical Internet Groupware, where I suggested that keywords could be passed in SMTP headers, or in XML packets carried in message bodies. From the Taglocity FAQ:

Taglocity has a number of ways of transferring the tags you have assigned to an outgoing message to the recipient. These include using the SMTP Keyword header and something called a ‘Tagline’. A Tagline is a footer that includes the text ‘Tags:’. The Tagline is very simple but will work on any device and mail relay, as it is placed within the message content.

In my experiments I didn’t find any evidence of the SMTP method, but maybe that got stripped out by an intermediate relay. The tagline wouldn’t be the nicest thing to have to parse mechanically:

<p class=3DMsoNormal><span style=3D'font-size:8.0pt;font-family:"Arial","sa= ns-serif"; color:#8C8C8C'><a href=3D"http://www.Taglocity.com">Taglocity</a> Tags: tag= ging, socialsoftware</span><span style=3D'font-size:12.0pt;font-family:"Times New= Roman","serif"'><o:p></o:p></span></p>

But it’s doable, and there may be an option for including a more well-structured packet.
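As a rough illustration of how doable it is, here is a sketch that decodes a quoted-printable body with Python's standard `quopri` module and pulls out whatever follows "Tags:" up to the next HTML tag. The sample body is a shortened stand-in for the markup above, and the function name is my own. A real mail client would normally let the `email` package do the decoding.

```python
import quopri
import re

def extract_tags(raw_body):
    """Return the tags found in a Tagline footer, or [] if there is none.

    raw_body is the quoted-printable encoded message body, as bytes.
    """
    html = quopri.decodestring(raw_body).decode("utf-8", errors="replace")
    match = re.search(r"Tags:\s*([^<]*)", html)
    if match is None:
        return []
    return [tag.strip() for tag in match.group(1).split(",") if tag.strip()]

# Shortened sample with a soft line break ("=" at end of line) inside a tag.
sample = (
    b'<span style=3D"color:#8C8C8C">'
    b'<a href=3D"http://www.Taglocity.com">Taglocity</a> Tags: tag=\n'
    b'ging, socialsoftware</span><span><o:p></o:p></span>'
)

print(extract_tags(sample))
```

Decoding first matters because the soft line breaks can land in the middle of a tag, as they do here.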

These are just technical details though. The real question is: What kind of tag-related social dynamics are even possible in email? I guess that’s something you could only find out by trading tags with other people for a while.

In an internal email thread on this topic, one person noted:

I wonder if there’s both a public tagspace and a private tagspace? What if I want to tag a thread as “readlater” or “notmyproblem”… does everyone have to suffer my personal categorization?

I investigated, and found that there is a notion of public and private tagging. It works on a per-tag basis, though, unlike del.icio.us’ per-item privacy, so apparently you’d need to develop a private vocabulary that included no tags you’d want to use in public.

In another message on that internal thread, someone noted the obvious problem of critical mass. The social effects in del.icio.us are a function of scale. Is departmental or even corporate scale sufficient to sustain those effects? Even when it is, as I’ve heard is true for IBM’s internal use of Dogear, can the social effects of that kind of web application be usefully translated to the email environment?

I don’t know the answer, but I’d love to hear from folks who are actively using Taglocity (or equivalents, if they exist) about what works or doesn’t, and why or why not.

Chris Gemignani recreates a New York Times infographic in Excel

When I read this story about cancer care in the Sunday New York Times yesterday, I was struck by one particular information graphic which I thought was very nicely done:

It turns out that Chris Gemignani was impressed too, and he decided to recreate the image using Excel. Here’s what he came up with:

Going one huge step further, and in the spirit of today’s theme of narrating the work, he created a screencast in which he demonstrates the process of making that graphic. It’s a wonderful example of the dynamic I’ve been describing. One of the commenters on Chris’ blog thanks him for teaching him some helpful techniques. Another suggests a technique that Chris hadn’t used but thinks is interesting. Very cool!

With Excel, as with all software — on the desktop and on the web — there’s so much untapped potential. The obstacles are knowing what’s even possible, and then knowing how to achieve it. Screencasts like this one leap over both obstacles in a single bound.

A conversation with Moira Gunn about BioTech Nation

I’ve listened to many of Moira Gunn’s Tech Nation podcasts, so it was a treat to turn the tables and interview her for this week’s episode of my show. Recently she’s been devoting a lot of attention to the world of biotechnology. There’s a new show focused exclusively on that subject, BioTech Nation, and in March she published a book about the show: Welcome to BioTech Nation: My Unexpected Odyssey into the Land of Small Molecules, Lean Genes, and Big Ideas.

In this conversation we discuss what it’s like for a computer scientist and engineer to venture into the world of biotechnology, why the decade of biotech may finally have arrived, what makes biotech entrepreneurs special, and how we can use Internet media to enlarge the public understanding of science and technology.

On that last point, Moira echoed comments that I’ve also heard recently from Hugh McGuire and Timo Hannay, both of whom told me they listen voraciously to science-oriented podcasts. They all agree that hearing scientists narrate their own work, in their own words, is a wonderful new opportunity, and a great way to promote more and better public understanding.

It’s also interesting to hear Moira’s take on podcasting. Comparing her long experience with large terrestrial and satellite radio audiences to her recent experience with a smaller Internet radio audience, she says: “The quality of the listener you get on the Internet is far better.”

Beautiful code, expert minds

Last year Greg Wilson wrote to tell me about the collection of essays that he and Andy Oram were compiling into what has now become the book Beautiful Code: Leading Programmers Explain How They Think:

The idea is to get a bunch of well-known and not-yet-well-known programmers to select medium-sized pieces of code (100-200 lines) that they think are particularly elegant, and spend 2500 words or so explaining why.

The 600-page tome arrived recently, and as I’ve been reading it I’m struck once again by the theme of narrating the work. Of the chapters I’ve read so far, three are especially vivid examples of that: Karl Fogel’s exegesis of the stream-oriented interface used in Subversion to convey changes across the network, Alberto Savoia’s meditation on the process of software testing, and Lincoln Stein’s sketches (“code stories”) that he writes for himself as he develops a new bioinformatics module.

Although this is a book by programmers and for programmers, the method of narrating the work process is, in principle, much more widely applicable. In practice, it’s something that’s especially easy and natural for programmers to do.

It’s easy because a programmer’s work product — in intermediate and final form — happens to be lines of text that can be printed in a book or published online.

It’s natural because programmers have been embedded for longer than most other professionals in a work process that’s fundamentally enabled by electronic publishing. We’ve been sharing code, and conversations about code, online for decades.

Most work processes don’t lend themselves to the sort of direct capture and literal representation that you see in Beautiful Code. Not yet, anyway. I think that can and will change, though, and I think two emerging forms of media will be powerful agents of change.

One of those forms is Internet video, which enables the capture and sharing of many kinds of physical-world expertise. The other is screencasting, which does the same for virtual-world expertise. Narration of work in these forms won’t be able to be printed in a book. But it will be just as valuable as the narration in Beautiful Code, and for the same reasons. Access to expert minds is just inherently valuable. We’re entering an era in which we’ll be able to access many more — and many different kinds of — expert minds. I’m looking forward to it. Meanwhile, I’m enjoying the access I have now to the 38 minds that Greg and Andy have collected for this book.

Behind the scenes: The editing of a screencast

While I was editing today’s screencast I kept a log of my edits, and I’ve included that log below. As is typical when I edit screencasts, this one squeezed down quite a lot: from almost 54 minutes to 34 minutes. The result not only saves the viewer a precious 20 minutes, but also unfolds in a far more entertaining and engaging way.

I’ve written a lot about why to do this kind of editing, but never shown in detail what the process is like. For folks who are familiar with the editing process — in any medium — this is all just basic knowledge and common sense. But there are lots of folks who are not familiar with the editing process in any medium. So to convey what it’s like, I decided to narrate (part of) the editing of this particular screencast.

As I’ve mentioned before, there’s one huge difference between editing audio and editing video. With audio, as with text, you can seamlessly cut and rearrange to your heart’s content. With video, the need to preserve visual continuity imposes severe limits, especially on the so-called internal edits that elide words and phrases. It’s interesting to note that, in this respect, the demo/interview genre of screencasting has more in common with audio than with video. There’s usually a lot less happening in a screencast than in motion video. So you can usually get away with the sort of heavy editing that’s normally only possible in the audio domain. And it’s very useful to be able to do that.

(Initial length: 53:45.)

I cut the first 2.5 mins of Henrik talking in general terms about CCR, DSS, the programming model. Why? Nothing to show, and this info is available elsewhere.

The real meat of this demo is to show how the Robotics Studio exposes a RESTful interface, and to demo interactions with (real and simulated) robots using that interface.

In the next segment, Henrik starts by saying “I have a nice big robot next to me, I might be able to show you, if I can just…”

I then cut 15 seconds of him fumbling around in the services directory and muttering to himself, while hunting for the webcam interface. So it went from:

“I might be able to show you, if I can just” …. 15 seconds of fumbling and muttering … “there you go! [image appears]”

to:

“I might be able to show you, if I can just … there you go! [image appears]”

This is partly about respect for the viewer’s time, because people have better things to do than watch and listen to 15 seconds of fumbling and muttering. And it’s partly about keeping the storyline moving forward in an engaging way.

A subtle point here is that I left in just enough of the fumbling and muttering. If I had reduced it to:

“I might just be able to show you…there you go!”

then it would have felt overproduced and inauthentic. I want Henrik to fumble and mutter a little bit, that’s part of the whole charm of the thing. But I want to limit the fumbling and muttering to a reasonable length. I think that leaving in “if I can just…” retains just enough of that quality — but not too much:

“I might be able to show you…if I can just…there you go! [image appears]”

During the next stretch I made no major cuts, but lots of little ones in the range of 2 to 5 seconds. These are places where the audio pauses because Henrik is thinking, or waiting for the computer to respond. And they are also places where he’s just verbally warming up to what he really wants to say — or where I am doing the same.

Example: “…and so, um…” –> “” == 2 seconds saved

Example: “And what we have here is that, um, and so, everybody has seen a web server” –> “Everybody has seen a web server” == 5 seconds saved

These internal cuts are completely inaudible and, so long as they don’t interrupt the onscreen action, also invisible. Since a typical screencast is often visually quiescent there are many opportunities to make these cuts. They not only reduce the end-to-end time, but also — just as important — they make the video far more watchable.

The next major cut was a 20-second setup leading to the statement: “In our model, everybody is a client and everybody is a server.” In the setup, Henrik talked about how typical web apps (like home banking) exhibit a more classical client/server architecture. It was a judgement call but, in this case, I decided that the kinds of folks who will care about RESTful interfaces to a robotic services fabric didn’t need the setup, and that it was more valuable to shave those 20 seconds than to keep them.

It’s worth noting how the context supported making this cut. Originally:

“We have services that talk to each other, that wire each other up, and use each other to construct and compose applications.”

… 20-second setup ….

“In our model, everybody is a client and everybody is a server.”

Finally:

“We have services that talk to each other, that wire each other up, and use each other to construct and compose applications. In our model, everybody is a client and everybody is a server.”

It flows perfectly.

Next I cut a restatement of “everybody is a client and a server” which chewed up 5 or 6 seconds without adding anything new. In doing so I ran into a logistical problem. When trying to make precise audio cuts in Camtasia you can run into trouble in tight spaces. (I keep meaning — and keep forgetting — to mitigate this problem by capturing at a higher frame rate than the one at which I finally produce.) A workaround is to silence a region that’s too small to accurately cut.

So, for example, after cutting that restatement I wound up with:

...at the same time same time. That has some great benefits.

---------

I wasn’t able to cut the redundant “same time” without affecting the “That has” — but I was able to replace the redundant “same time” with silence:

...at the same time. ________ That has some great benefits.

That left a perfectly natural-sounding 1-second pause.

(Length now: 49:38)

Through the next section I made assorted internal cuts, and one major cut. After Henrik contrasted OO-style inheritance with the additive composition of RESTful services which is the extension pattern for the Robotics Studio, we got into a several-minute discussion about the tradeoffs between these approaches. It wasn’t really conclusive, though, and I realized that it would be better to factor that out. In fact, while recording, I decided at this point to do a separate podcast in which we’ll drill down on these more abstract points. In a screencast, you want to keep the visuals moving along.

(Length now: 46:38)

For the remainder, more of the same: internal cuts, plus 20- or 30-second chunks that were disposable.

Final length: 34:30

Henrik Frystyk Nielsen on the RESTful architecture of Microsoft Robotics Studio

Henrik Frystyk Nielsen used to work for the World Wide Web Consortium on some key pieces of infrastructure including the HTTP specification and libwww. He left the W3C in 1999 and now works for Microsoft where his current project is Robotics Studio, whose tagline is: “A Windows-based environment for academic, hobbyist and commercial developers to easily create robotics applications across a wide variety of hardware.” What that description doesn’t tell you, but today’s screencast shows, is that the Robotics Studio is based on a RESTful architecture, and that applications are built by composing lightweight services in ways that will be instantly familiar to every web developer.

To drive home that point, much of the action in this screencast occurs in a web browser, where you’ll see Henrik explore a distributed directory of services and view XML snapshots of the current state of bumpers, cameras, and laser range finders.

From a read-only perspective it’s all HTTP GET, and you can do things like subscribe to robotic sensors using RSS feeds. When you control a robot, SOAP is used to optimize fine-grained updates. But either way it’s a loosely coupled and late bound system that leverages the fundamental flexibility of web architecture in a very different domain. In one compelling demonstration of that flexibility, you’ll see a generic controller — which had been controlling the robot in Henrik’s office with no prior knowledge of the device, purely by interface discovery — switch over to a simulated robot and drive it by means of the same kind of discovery.

Nobody goes swimming any more

|

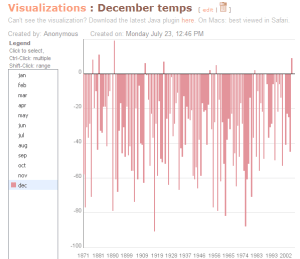

| Mean temperatures for December Concord, NH, 1871-2007 |

The weekend was beautiful here in New Hampshire but today we’re back to what has over the past five years started to seem like normal for July: cool and cloudy. I’ve been taking an informal survey this month, asking friends whether they’ve gone swimming yet this summer. Almost nobody has, which we all agree is just weird, and which we all tend to attribute to climate change.

For me, our recent pattern of cool and cloudy summers is becoming a deal-breaker. Reliably nice summers used to be the payoff for living here through the winter, but if I can’t count on summer to be reliably nice, I’m tempted to consider other options. That’d be a big decision though, so I’d like to support it with hard data.

Given all that’s been said and written about climate change, it turns out to be surprisingly hard to get hold of historical climate data. I had to look around quite a while before I found this FTP site where NOAA has parked files full of raw temperature and precipitation data.

The files cover the whole world and they go back a long way. Here’s one line from the mean temperature file:

4257260500201993 -37 -73 -2 85 143 190 219 208 164 88 45 -13

The first three digits (425) are the country code, the next five (72605) are the World Meteorological Organization station number, and the last four digits of that first field (1993) are the year. What follows are twelve values which are monthly mean temperatures in tenths of degrees Celsius.
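Here is a minimal sketch of how a record like that one could be unpacked in Python. It assumes the layout is exactly what the sample line shows: a 16-character identifier followed by twelve whitespace-separated integers. The function name and the dictionary keys are my own, and any missing-value marker the real files may use is not handled.

```python
def parse_mean_temp_line(line):
    """Split one record into its identifying fields and monthly means."""
    fields = line.split()
    header, raw_values = fields[0], fields[1:]
    if len(header) != 16 or len(raw_values) != 12:
        raise ValueError(f"unexpected record layout: {line!r}")
    return {
        "country": header[0:3],      # 3-digit country code
        "station": header[3:8],      # 5-digit WMO station number
        "year": int(header[12:16]),  # last four digits of the identifier
        # Values are stored in tenths of degrees Celsius.
        "temps_c": [int(value) / 10 for value in raw_values],
    }
```

Feeding it the sample line yields country 425, station 72605, year 1993, and a January mean of -3.7 degrees Celsius.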

Since Concord, NH is the closest WMO station to me — 55 miles away — I uploaded the Concord data for mean temperatures (1871-2007) and precipitation (1859-2007) to Many Eyes, and looked for a recent pattern.

I didn’t find one. Here’s a view of summer mean temperatures, and here’s a view of precipitation. Look for yourself.

On the temperature front, we’re all very aware that this past December was freakily warm. And as this view shows, there hasn’t been an above-zero-Celsius December since 1982. But there were more (and warmer) above-zero-Celsius Decembers before the midpoint of the time series — 1939 — than there have been since.
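That before-and-after comparison is easy to reproduce once the data is loaded. The sketch below assumes a dictionary that maps each year to its twelve monthly means in degrees Celsius, with None for a missing month. The function name and the data shape are illustrative, and this is not how Many Eyes computes its views.

```python
def count_above_zero_decembers(monthly_means, midpoint):
    """Count Decembers with a mean above 0 C, before and from the midpoint year."""
    before = after = 0
    for year, temps in monthly_means.items():
        december = temps[11]
        if december is None or december <= 0:
            continue  # skip missing values and at-or-below-zero Decembers
        if year < midpoint:
            before += 1
        else:
            after += 1
    return before, after
```

Calling it with a midpoint of 1939 returns the two counts being compared here.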

I am not saying that the planet isn’t warming or that the climate isn’t changing. But we ought to be able to explore the evidence for these phenomena, and review the interpretations of them, in much more interactive and collaborative ways. Not only for reasons of global policy, but also so we can contextualize what we see happening around us.

Arguing about the weather has undoubtedly been a favorite pastime of our species since we learned how to talk. Now we can have those arguments in the context of actual data. And as questions about climate change grow more critical, it’s imperative that we do. Hats off to Martin Wattenberg, Fernanda Viégas, and their colleagues for creating Many Eyes and showing how we can.

A conversation with Ken Banks about text-message-based networking in Africa

In a pair of articles from today’s New York Times, the world’s unequal distribution of Internet access refracts through two very different lenses. Paul Krugman’s subscriber-only column, The French Connections, highlights the sorry state of the United States relative to France, Japan, and other nations where broadband access is more widely distributed and much faster. But as Ron Nixon points out in Africa, Offline: Waiting for the Web, “less than 4 percent of Africa’s population is connected to the Web.”

That’s not likely to change anytime soon, according to Ken Banks, whom I interviewed for my weekly ITConversations podcast. The network that matters in Africa is the pervasive cellphone network. (The US, of course, fails to lead in that realm too.) Leveraging the ubiquity of text messaging, Ken has created an entry-level SMS hub called FrontlineSMS, free to charities and non-profits, which automates various patterns of text-message-based communication. He’s recently been awarded a MacArthur grant to continue this work. Good going, Ken!

A conversation with John Shewchuk about BizTalk Services and the Internet Service Bus

In today’s installment of my Microsoft Conversations series I talked with John Shewchuk about BizTalk Services, a project to create what he likes to call an Internet Service Bus. The project’s blog, with pointers to key resources, is here. There’s also a Channel 9 video on this same topic, in which John Shewchuk and Dennis Pilarinos illustrate the concepts using a whiteboard and demos.

I began our conversation with a reference to a blog item posted by Clemens Vasters back in April when BizTalk Services was announced. He described a Slingbox-like application he’d done for his family.

It’s a custom-built (software/hardware) combo of two machines (one in Germany, one here in the US) that provide me and my family with full Windows Media Center embedded access to live and recorded TV along with electronic program guide data for 45+ German TV channels, Sports Pay-TV included.

Clemens did this the hard way, and it was really hard:

The work of getting the connectivity right (dynamic DNS, port mappings, firewall holes), dealing with the bandwidth constraints and shielding this against unwanted access were ridiculously complicated.

And he observed:

Using BizTalk Services would throw out a whole lot of complexity that I had to deal with myself, especially on the access control/identity and connectivity and discoverability fronts.

I began by asking John to describe how BizTalk Services attacks these challenges in order to mitigate that complexity. We talked through a couple of scenarios in detail. The one you’ve heard the most about, if you’ve heard of this at all, is the cross-organization scenario in which a pair of organizations can very easily interconnect services (of any flavor, could be REST, could be WS-*) with reliable connectivity through NATs and firewalls, dynamic addressing, and declarative access control.

There’s another scenario that hasn’t been much discussed, but is equally fascinating to me: peer-to-peer. We haven’t heard that term a whole lot lately, but as the Windows Communication Foundation begins to spread to the installed base of PCs, and with the advent of a WCF-based service fabric such as BizTalk Services, I expect we’ll see the pendulum start to swing back.

At one point I asked John whether BizTalk Services supports the classic optimization — used by Skype and other P2P apps — in which endpoints, having used the fabric’s services to rendezvous with one another, are able to establish direct communication. He said that it does, and followed with this observation about the economics of hosting BizTalk Services.

When we host it, we’ll incur certain operational costs, so we’ll want to recover those costs. But our goal is not to differentiate our offering from others because we host the software, it should be the case that Microsoft competes on an equal basis with other hosters.

In many regards, our motivations differ from other providers. Take Amazon’s queueing service as an example. Because we’ve got software running both up in the cloud as well as on the edge nodes, we can create a network shortcut so that the two endpoints can talk directly. In that scenario, we don’t see any traffic up on our network. All we did was provide a simple name capability, so the two applications end up talking to each other, using their own network bandwidth. We can use the smarts in the clients and in our servers to reduce the overall operating cost.

Now in that scenario, the endpoints are presumably servers running within an organization, or maybe across organizations. But WCF-equipped clients can play in this sandbox too. The idea is, in effect, to generalize the capabilities of an application like Skype, and enable developers to build all sorts of applications that leverage that kind of fabric.

That’s a vision that many of us in the industry share. We’d just like to reduce the barriers to being able to connect our machines and our solutions. The industry’s seen a big transition to a hosted world, because that’s been the easiest way to get universal connectivity. If a big organization with a whole bunch of high priests of IT were out there running the servers for you, then you didn’t have to get your machine to be able to do that.

But sometimes I might just want to put those things on my machine, and if it were easy enough, wouldn’t that be a great model? Why do I want to be beholden to some organization that’s capturing my data? Maybe I want to have more privacy.

Of course there are benefits to moving it out to the cloud, but we think that should be a decision you make after the fact. Build your application to a consistent abstraction, then decide where you want the dial as the demands on the application change. If I’m just trying to do a quick video share with my friends, why do I have to create a new space? Why not simply say, here’s the URL? And have that URL be stable?

Why not indeed. At its core the Internet has always been fundamentally peer-to-peer, but after a while we couldn’t sanely continue in that mode. Things got too scary, so we built walls and created ghettoes. Technically our PCs are still Internet hosts but, except when they’re running a few important P2P apps, they haven’t really been hosts for a long time. It’d be great to get back to that.

What’s easy, and what’s hard, about getting from Excel to a GeoRSS-enabled mashup

I’m making some progress in my quest to improve access to (and interpretation of) local public data. Yesterday’s meeting with the police department yielded a couple of spreadsheets — one with five-year historical data, and one with recent incident reports. The latter includes addresses which enabled me to plot the incidents in Virtual Earth.

It has been a while since I’ve done this, and the technology has matured. GeoRSS, in particular, seems to be a fairly new thing in the world. It’s a simple idea: use RSS (or Atom) to package sets of locations, encapsulating latitude and longitude coordinates in the GeoRSS namespace. Here’s the GeoRSS file I built from the police spreadsheet.
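To make the idea concrete, here is a rough sketch of generating such a feed with Python’s standard library. It is not the script I used. The function name and the keys of each incident dictionary are made up for illustration; the namespace URI and the "latitude longitude" form of the point element follow the GeoRSS Simple encoding.

```python
import xml.etree.ElementTree as ET

GEORSS_NS = "http://www.georss.org/georss"

def build_georss(title, link, incidents):
    """Return an RSS 2.0 document whose items carry georss:point elements."""
    ET.register_namespace("georss", GEORSS_NS)
    rss = ET.Element("rss", {"version": "2.0"})
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = title
    ET.SubElement(channel, "link").text = link
    ET.SubElement(channel, "description").text = title
    for incident in incidents:
        item = ET.SubElement(channel, "item")
        ET.SubElement(item, "title").text = incident["title"]
        ET.SubElement(item, "description").text = incident["description"]
        # GeoRSS Simple: latitude first, then longitude, separated by a space.
        point = ET.SubElement(item, f"{{{GEORSS_NS}}}point")
        point.text = f'{incident["lat"]} {incident["lon"]}'
    return ET.tostring(rss, encoding="unicode")
```

Each incident becomes one item, and the coordinates come from whatever geocoder produced them.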

In poking around online in order to learn how to use GeoRSS, I ran across a familiar name: Jef Poskanzer. For many years I have been enlightened by Jef’s various experiments at acme.com. Way back in 1997, for example, I was using his implementation of Java servlets to explore that way of building online services. So I was delighted to see Jef’s name pop up again when I looked into GeoRSS.

On his GeoRSS page you can plug in the URL of a GeoRSS file and his service will map it for you in either Google Maps or Yahoo! Simple Maps. Nice! Jef’s page doesn’t include Virtual Earth as an option, but Virtual Earth now supports GeoRSS too, so this was a good opportunity to try out that combination. It was, as you’d expect, quite easy to do. Given a well-formed GeoRSS file, all of the modern mapping APIs require very little of a developer who wants to spray the locations in the file onto a map.

But as I was reminded when going through this exercise, it requires a whole lot of work to transform a typical real-world document like the one I received yesterday — an Excel spreadsheet with manually-typed addresses — into a well-formed GeoRSS file. Data preparation is always the bottleneck.

Reflecting on how I got the job done, it’s amazing to consider the number and diversity of tools that I used. A partial list includes Excel for massaging and sorting data, Python for various bits of transformational glue, and curl to pump addresses through an online geocoder.

I also leveraged a ton of tacit knowledge about the web, about XML, about regular expressions, and about the organization and display of data.

It’s always striking to me how we technical folk tend to focus on the endgame. “Look, ma, no hands! Just plug your [insert newest format] into your [insert newest tool] and it’s automatic!”

Here’s one small example of the difficulties we sweep under the rug. Consider a series of incidents at these addresses:

27 Damon Ct. 27 Damon Ct. 35 Castle St. 35 Castle St. 45 Damon Ct. 45 Damon Ct. 165 Castle St. 165 Castle St.

That’s how the address column will sort in a typical spreadsheet. But that’s not how you’d like to scan the legend in a mashup. To help visualize neighborhood patterns, you’d rather see something like:

Damon Ct. (27) Damon Ct. (27) Damon Ct. (45) Damon Ct. (45) Castle St. (35) Castle St. (35) Castle St. (165) Castle St. (165)

In reality it’s more complicated because I’ve omitted apartment numbers, dates, and annotations. The organization of these elements has a profound influence on which kinds of visualizations tend to come for free, and which will require a lot of extra work.

Now, because I’m handy with data, with text processing, and with regular expressions, I know how to reorganize the raw data. And because I have a view of the endgame, I know why to do it. But none of this is evident to a normal person sitting in an office compiling incident reports into an Excel log.
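For the simplified example above, the reorganization might look like the following sketch. It assumes every address is a house number followed by a street name, which real data with apartment numbers and annotations will not satisfy. The function name is illustrative. Streets keep the order in which they first appear, and house numbers sort numerically within each street.

```python
import re

ADDRESS = re.compile(r"^\s*(\d+)\s+(.+?)\s*$")

def street_first(addresses):
    """Rewrite '27 Damon Ct.' as 'Damon Ct. (27)', grouped by street."""
    parsed = []
    for address in addresses:
        match = ADDRESS.match(address)
        if match is None:
            raise ValueError(f"cannot parse address: {address!r}")
        parsed.append((match.group(2), int(match.group(1))))
    # Remember the position at which each street was first seen.
    first_seen = {}
    for street, _ in parsed:
        first_seen.setdefault(street, len(first_seen))
    # Group by street, then order by house number as an integer.
    parsed.sort(key=lambda pair: (first_seen[pair[0]], pair[1]))
    return [f"{street} ({number})" for street, number in parsed]
```

Comparing house numbers as integers rather than as text is what keeps 165 from sorting ahead of 35.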

It seems, though, that we should now be able to normalize this kind of data entry in a way that would maximize the reuse value of the data. If I can feed random addresses into a geocoder and pretty reliably get back coordinates, I should also be able to feed unstructured addresses into some other online service and get back well-structured addresses. And I should be able to equip Excel to use that service to ensure that the structured addresses are logged with incidents. Is there a recipe for doing that?

Revisiting language evolution in del.icio.us

Recently I began keeping track of interesting public data sources using the del.icio.us tag judell/publicdata, and invited others to do the same using their own del.icio.us accounts. That method sets up an interesting pattern of collaboration whereby all contributions flow up to the global bucket, tag/publicdata, but individual contributors can curate subsets of that collection according to their own interests.

A nice example of that pattern emerged when the Many Eyes folks showed up at manyeyes/publicdata. Their contributions flowed up to the global bucket, and thence to the RSS feed I’m watching, which is how I got to find out about this excellent survey of a variety of public sources. It was done for a class at the University of Maryland, and it very helpfully characterizes data sources along a number of axes including searchability, browsability, interaction, and formats.

All this is quite straightforward and unsurprising to anyone who’s familiar with social bookmarking — which is to say, still quite unfamiliar to most people today.

So there’s not much chance that the next maneuver I’m going to describe will resonate in the general population, but I want to describe it anyway because those of us who think about these things ought to be thinking about how to make it more discoverable.

Several years ago, in a screencast entitled Language evolution in del.icio.us, I posited that tag vocabularies could evolve in the same way that natural languages do. In the realm of natural language, we coin new words all the time. When we hear a new word that we like, we adopt it — or, perhaps, adapt it. The punchline of the screencast was that this is how the grassroots semantic web will form. There are just two requirements: We need to be able to speak, and we need to be able to hear others speak.

Speaking, in the realm of tag vocabularies, means writing tags, and sometimes creating new ones. Hearing means reading tags, and observing how they’re applied to resources and by whom.

If you land on a page that you haven’t yet bookmarked, you can use the del.icio.us posting bookmarklet to show you (as recommended tags) which other tags have been assigned to that URL.

I tend to rely on a more sensitive organ of hearing: a bookmarklet that I call dc, for del.icio.us conversation. I use it all the time. Suppose, for example, I’d found that University of Maryland page through some other means of referral than del.icio.us. I’d have reflexively clicked the dc bookmarklet to produce this report which shows who else has bookmarked that page, and how it has been described.

In this case there’s not much to see. The URL was bookmarked once in Feb 07, by elzzup, to the tags data and class, and again in Jul 07, by manyeyes, to the tag publicdata.

This view is interesting for a couple of reasons that I don’t think are widely appreciated. First, it shows a progression from general ways of describing the resource to a more particular way. Note, by the way, that the proposed refinement of data to publicdata is not visible when you launch the bookmarking form, which recommends only class and publicdata. Note also that the introduction of publicdata is really a hack. It would arguably be better to rely on the individual tags public and data. But that would make it necessary to query for the conjunction, and that connection is too fragile. So publicdata also suggests something about how to form tags — that is, by making these conjunctions explicit.

Second, it shows who has proposed publicdata — namely, manyeyes, an identity that may be recognized, and that if recognized will add weight to the proposed usage of the tag.

These are subtle effects. For most people, they’re too subtle to matter at all. But I’m reminded that there’s important work yet to be done to render these effects in ways that make it easier for everyone to hear (and visualize) linguistic evolution in the tag domain, so that people can participate more actively and more naturally in that evolution.

A message for library catalog vendors

The LibraryLookup project is almost five years old, and people are still gradually discovering it, as I’m periodically reminded when I get a flurry of emails such as was provoked by this Lifehacker article. I think it’s time for this idea to graduate from the realm of hacks for adventurous people, and enter the realm of normal capabilities that everyone takes for granted.

For starters, if your system supports searching by ISBN, I suggest that you offer — in addition to whatever syntax you already use — one simple and standard pattern:

/search?isbn=1565925378

Next, use the OCLC’s xISBN service to expand the search to include all manifestations of the work indicated by the given ISBN.

Finally, have each instance of your system publish the bookmarklet made from these ingredients on its home page, along with instructions for using it.

For extra credit, enable patrons to indicate wish lists of books they’re interested in, and notify them when books on those lists become available.

People like this stuff when they discover it, but as yet not many have, and until it’s baked into your systems, most won’t.
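As a sketch of how little is involved, here is the core of the idea in Python rather than as a bookmarklet. It assumes the ISBN appears as a 10-character run in the URL of the page being viewed, and that the catalog answers at the /search?isbn= pattern suggested above. The function names and the catalog address are hypothetical, and the xISBN expansion step is left out.

```python
import re
from urllib.parse import urlencode

# Nine digits followed by a digit or X: the shape of a 10-character ISBN.
ISBN10 = re.compile(r"\b\d{9}[\dXx]\b")

def find_isbn(page_url):
    """Return the first ISBN-like token in a URL, or None if there is none."""
    match = ISBN10.search(page_url)
    return match.group(0) if match else None

def catalog_search_url(catalog_base, isbn):
    """Build a catalog lookup URL using the /search?isbn= pattern."""
    query = urlencode({"isbn": isbn})
    return catalog_base.rstrip("/") + "/search?" + query
```

A bookmarklet would do the same two steps in the browser: pull the ISBN out of the current location, then open the catalog URL.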

A conversation with Ted Okada about the work of Microsoft Humanitarian Systems

The same audio glitch that ruined my interview with Joel Selanikio also affected another interview on the same day. That interview, for my Microsoft Conversations series, was with Ted Okada, who is the director of a small group called Microsoft Humanitarian Systems. So again I’ll have to settle for reporting highlights from the interview along with some quotes I was able to salvage.

Ted came to Microsoft by way of Groove, where he’d been hired to spearhead Groove’s use in the humanitarian sector which had increasingly come to value the product for several interesting properties — technical resilience in the face of intermittent connectivity, and political resilience in the sense that it creates neutral infrastructure owned by no single agency. When I caught up with Ted, as he was packing for a trip to Afghanistan, he gave this example of the latter:

We’ve been working with an NGO that was using Groove to negotiate between the Tamil Tigers and the Sinhalese government in Sri Lanka. The two parties wouldn’t sit in the same room, but they did agree to use Groove to arbitrate the conflict.

In this appearance on Channel 10, Ted talks about how Groove is uniquely well equipped to support collaboration in disaster relief situations, and he demonstrates a Groove-based solution that enabled five different relief organizations responding to the 2005 Kashmir earthquake to synchronize on the same operational picture.

Ted has also been one of Microsoft’s representatives at Strong Angel, an exercise to simulate disaster response that’s been held three times — in 2000, 2004, and most recently 2006. Strong Angel was the brainchild of U.S. Navy Medical Corps commander Dr. Eric Rasmussen. I asked Ted what it’s like to participate, and he replied:

It’s an odd mixture of the early Interop conferences — where people were trying to get routers from different manufacturers to work together — plus a little bit of Burning Man, a little bit of Foo Camp, and a little bit of the military channel. Officially it’s a demonstration, but it involves all those elements and addresses all kinds of questions. How do you cross the civil/military boundary, particularly when trust is low and the need for collective action is high? How do you make sure all the gear works together? Of course it’s also a venue for some interesting gear, like solar reflective yurts that you might find at Burning Man — and in fact actually were taken to Burning Man.

As John Markoff reported, there were some notable interoperability failures at Strong Angel 3 but also some notable successes. One of the latter involved the use of Simple Sharing Extensions (SSE), an extension of RSS, to synchronize location data between Google Earth and Microsoft Virtual Earth.

I wondered what broader role SSE might play, given that it extends a Groove-like data synchronization capability to a diverse set of applications. It turns out that Ted will be testing a prototype SSE adapter for Microsoft Access on a trip to Kabul next week:

From my perspective as a relief and development person for 20 years, you can’t overestimate the value of simple tools like good old Access. What if Access could relay messages and synchronize via SSE, so that you’ve got persistent statefulness and failover on highly intermittent and jittery networks? Suddenly Access becomes a much more lively player in the edge-based mesh. So now in Afghanistan we’ll actually be using this wonderful everyman’s tool, Access, enlivened with SSE adapters, to help out an NGO partner who’s told us that would really help them share data with the other stakeholders in the reconstruction project they’re working on.

Ted has an interesting take on what Microsoft might learn by collaborating with these kinds of partners:

If you make the developer part of an environment that is itself stressed, and build for the extreme case, maybe you can titrate lessons faster and close the loop quicker on accelerated learning. It’s hard to work in a place like Afghanistan. It’s an austere environment and you’re at the mercy of that environment. Very few people know who Microsoft is — or care who we are — and there aren’t many places in the world where that’s true. In some ways, perhaps, immersion in that environment could turn out to be the ultimate sort of extreme programming.

Those were the highlights. It’s painful to have bungled those audio recordings. When I told Phil Windley he said, “I live in fear of that.” Well, the silver lining — for folks who don’t listen to podcasts, at least — is that it forced me to write more about the interviews than I normally do. Tomorrow I’ll record what will be the third in a series of conversations about humanitarian uses of technology, and you can bet I’ll double-check to make sure I’m recording what I think I’m recording!

A conversation with Joel Selanikio about collecting public health data in developing countries

For this week’s ITConversations show I interviewed Dr. Joel Selanikio, co-founder of DataDyne, a non-profit consultancy dedicated to improving the quantity and quality of public health data. DataDyne’s principal tool is EpiSurveyor, a free and open source software product that simplifies the creation of forms for doing field data collection with handheld devices. There’s a Windows-based forms designer, and a runtime for Palm OS-based PDAs which is being ported to Windows Mobile- and Java-based devices.

It was a great interview but, when I opened up the audio file I’d recorded, I was horrified to find that an audio glitch had rendered it unusable. So instead I’ll report here on what Joel told me, and weave in some quotes I was able to salvage.

Our conversation was well-timed because I’d just watched the dustup between Michael Moore and Wolf Blitzer, checked out Moore’s rebuttal of Sanjay Gupta’s report on SiCKo, and tracked down some of the cited sources — including the United Nations Human Development Report. How reliable are these sources, particularly for developing countries? As you might imagine, and as Joel’s experiences confirm, there’s a lot of guessing going on:

It’s amazing how unaware people are of the tenuous nature of our knowledge of, really, anything. One of the things I ask people is: “What’s the population of the United States?” And they’ll say 290 million, or 300 million. But the real answer is: We don’t know. And we check, every 10 years, and we do a pretty good job of checking.

So if you want to know what’s the leading cause of disease in children in rural Africa, what’s the chance that you’ll have any idea what the answer to that question is?

I was a first responder to the tsunami in Southeast Asia. Imagine showing up in a place where the slate has been wiped clean, God just slammed his hand down and flattened everything. The roads are gone, and three or four thousand aid workers are all clustered in the few places they can get to. But of course, you have to come up with an estimate of how many people are dead. So somebody picks a number, and then you hear it on CNN that night. Fifty thousand, a hundred thousand, a hundred and twenty-five thousand, none of those estimates were based on any attempt to really find out.

A friend who works for American Red Cross asked me what I thought they could do. I said the most valuable thing to do with the hundreds of millions of dollars of donations they’d received was to invest in data collection. Normally in a situation like that you do a sample. You go to ten percent of households, and try to extrapolate, and hope that your sample isn’t biased. But I said, with five hundred million dollars, there’s no reason we can’t get the local people to do a census. Go to every refugee area and every household and actually find out — not estimates — but actual numbers, which would be of huge importance for reconstruction.

So of course it didn’t happen.

As a one-time database programmer who went to medical school and then became an epidemiologist, Joel’s acutely aware of the relationship between information technology and epidemiology, a discipline that is, as he reflects here, profoundly data-driven:

In about 1995, over the course of six weeks, kids start showing up at the university hospital in Port-au-Prince, Haiti. They had different symptoms, but they all died. At about the hundred mark the local docs contacted the World Health Organization who contacted CDC, and a colleague of mine and I went down to Haiti. It’s a high-pressure situation, kids are dying every day. When we got there we began creating a database of responses to questions: what their symptoms had been, what medications they had taken. Within a few days it became apparent that all of the kids who’d died had taken one of several locally-produced Tylenol-like medications. Once we discovered that, they made an announcement and the outbreak ended.

People would ask me, “What magic did you work?” Well, in clinical medicine, the way that we understand things is — if it’s a rash, I look at the rash, I think about it, I look stuff up, but I don’t systematically create a database. For one patient you can juggle the variables in your head. But when you have a population of affected people, you need to collect data and analyze it. That’s the basis of epidemiology.

Unfortunately our standards and methods for data collection are far lower in the realm of public health than in the realm of business:

Imagine if you were the CEO of Toyota, and your CFO said, well, sales are pretty good, we think, but we’re not sure. He’d flip. And yet that’s how things are with public health. We’ve been making a concerted effort for fifty years to get rid of malaria, but the quality of our statistics is terrible, and we’ve just gotten used to that.

A key reason for that poor quality is that the collection of public health data in developing countries is still mostly a paper-based activity. And while handheld devices are obviously a great alternative, what Joel found is that the software available for creating surveys was way too hard for ordinary folks to use:

If you’re the ministry of health in Kenya and you want to survey a hundred thousand households, and use handhelds to do it, you’ll need some knowledge of programming. If I told you to write down your questions in Microsoft Word you could easily do it, it’s frictionless, but with the commercially available software for creating surveys that run on PDAs, you can’t do it. That software can do all kinds of fancy things, of course, but most of the time the information you need to collect is very simple stuff.

So that’s what EpiSurveyor does, Joel says. It makes the simple stuff simple, so that ordinary folks in developing countries can create surveys without having to hire programmers and consultants.

But how can you have any assurance that the data gathered in these kinds of surveys will be usefully comparable? Are there standard forms and standard schemas? Not really, Joel says. The existing forms are hard to find and reuse, and there’s been little progress toward standardization:

If you went to a UN organization and said, we want to standardize how we collect data about child nutrition, the response would be, let’s have a conference. We’ll have experts get together in Rome, and then in Paris, and decide what are the key questions for any standard child nutrition survey. But it’s hard to achieve unanimity, and there’s a built-in incentive not to because every time you get together it’s a trip to Rome.

Coming at the problem from a grassroots web 2.0 perspective, Joel’s working to translate the various forms used by international agencies into EpiSurveyor’s XML format, and to make them available in a shareable repository. The notion is that reuse will occur naturally when it lies along the path of least resistance. And he sees that starting to happen. For example, having trained field workers in both Kenya and Zambia, he discovered after the fact that the Zambian workers had reused a Kenyan survey they’d found on DataDyne’s private project management site.

My Zambian contact said, Joel, I hope this is OK, but I downloaded their form, and opened it up, and made a few changes — basically just the names of provinces — and then I used their form.

Of course it was more than OK, he was delighted. Asking the same questions, in the same ways, is exactly what you want to happen, and yet it rarely does.
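Here is a rough sketch of why that kind of reuse is cheap when a form is just a small XML document. The XML layout, the survey content, and the adapt_form helper are all hypothetical. EpiSurveyor's actual format isn't shown in this post, so treat this as an illustration of the idea, not of the product.

```python
import xml.etree.ElementTree as ET

# A made-up form definition. The element and attribute names are
# assumptions for this sketch, not EpiSurveyor's real schema.
KENYA_FORM = """<survey name="clinic_supplies_kenya">
  <question id="province" type="choice" text="Province">
    <option>Nairobi</option>
    <option>Coast</option>
  </question>
  <question id="stock" type="number" text="Vaccine doses in stock"/>
</survey>"""

def adapt_form(xml_text, new_name, question_id, new_options):
    """Copy a form, rename it, and replace one question's choice list."""
    root = ET.fromstring(xml_text)
    root.set("name", new_name)
    question = root.find(f"./question[@id='{question_id}']")
    # Drop the old choices, then add the new ones in order.
    for option in list(question.findall("option")):
        question.remove(option)
    for label in new_options:
        ET.SubElement(question, "option").text = label
    return ET.tostring(root, encoding="unicode")

zambia_form = adapt_form(
    KENYA_FORM, "clinic_supplies_zambia", "province", ["Lusaka", "Copperbelt"]
)
print(zambia_form)
```

Every other question carries over untouched, which is the point: both countries end up asking the same things in the same ways.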

The forms repository that Joel envisions doesn’t yet exist, but he’s hoping that as DataDyne builds up a reputation around successful deployments of EpiSurveyor, the company will be able to attract the resources and the attention needed to make that happen.

New expectations (and new opportunities) for stewards of public data

Here’s an update for those who’ve been following the story of my quest for local crime statistics[1,2]. This morning I met with the police chief and some other officials. Given that I began asking for this data in late April or early May, and went through four rounds of telephone and then email contact, it shouldn’t have taken so long to convene the meeting. And it would have taken longer had I not engaged my friend Ted Parent, who is a lawyer and a great champion of democracy, to write a letter to the city attorney. The magic incantation in New Hampshire, by the way, is not Freedom of Information Act (FOIA), but rather Right to Know. It wasn’t enough for me to utter those magic words, though. Ted had to do that, in a letter that went on to describe in great detail my reputation, qualifications, and seriousness of purpose. That description is true, but shouldn’t have been necessary, nor should Ted’s services have been.

In any event, we had a productive discussion and will meet again soon to discuss logistics: what’s unavailable and why, what’s available and how to get it. What will likely be available is an update to this data set, which might or might not reveal trends since 2005. That would be of interest locally because, while there’s a strong sense that crime is worse lately, nobody seems to be clear about the details.

But here’s why that might not help. The feds only gather and report on certain categories of crime. Among those not included, the chief told me, are drunk driving incidents, which he’s been seeing a lot more of lately. Another systematic omission: rapes only count as rapes when inflicted on females by males.

Then there’s the fact that state participation in the National Incident-Based Reporting System (NIBRS) — which is apparently the new name for what used to be called UCR (Uniform Crime Reporting) — is voluntary and spotty.

So it’s unclear what questions can even be answered — in local, state, or national context — by the UCR/NIBRS data that the city’s software can and does report to the feds.

But other questions are entirely outside the scope of that dataset. It includes no location information, for example. I was surprised to learn that while the city does of course collect street addresses when entering crime reports into its database, they’re unaware of any straightforward way to get the location data back out in order to visualize geographic patterns. My hunch is that I can help them with that, if I can get hold of a raw export, so that’s something we’re going to explore at our next meeting.
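As a sketch of what a first pass over such an export might look like, the snippet below counts incidents per street address. It assumes the export arrives as CSV, and the column names and sample rows are invented, since I haven't seen the city's data yet. Geocoding the addresses for a map would be a separate step.

```python
import csv
import io
from collections import Counter

# Stand-in for a raw export. Columns and rows are hypothetical.
SAMPLE_EXPORT = """incident_id,offense,street_address
1001,Burglary,12 Main St
1002,Vandalism,12 main st.
1003,Burglary,40 Elm Ave
1004,Theft,12 Main St
"""

def normalize_address(raw):
    """Collapse case, punctuation, and extra spaces so variants match."""
    cleaned = raw.lower().replace(".", "").replace(",", "")
    return " ".join(cleaned.split())

def incidents_by_address(csv_file, address_column="street_address"):
    """Count rows per normalized address in an open CSV file."""
    counts = Counter()
    for row in csv.DictReader(csv_file):
        counts[normalize_address(row[address_column])] += 1
    return counts

counts = incidents_by_address(io.StringIO(SAMPLE_EXPORT))
for address, n in counts.most_common():
    print(n, address)
```

Even this crude normalization matters, because hand-entered addresses rarely match character for character.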

This has been an interesting process to observe. Today the assistant city attorney said something that crystallized, for me, an insight about the stewardship of public data. Although the city has so far received very few Right to Know requests, one of them, she said, could have proved very costly in terms of the software and consulting services that would have been needed in order to comply. That insight won’t rewrite the legacy system, but it certainly imposes an important new requirement on its successor.

The folks I met with today aren’t familiar with ChicagoCrime.org or CAPStat, but I didn’t get the impression they’re opposed to the idea of citizen participation in the interpretation of government data. On the contrary, I think they may conclude that deploying systems to enable that participation would be as useful to them as it would be to the public.

We have a long way to go at all levels: local, state, national, international. But expectations are being reset, up and down the line, and I’m hopeful that we’ll get where we need to go.

Data analysis as performance art

Hans Rosling has been justly acclaimed for a couple of TED talks on global health in which he makes mesmerizing use of his (and now Google’s) GapMinder software, which he uses to tell compelling stories with data. The software is very cool, but what really makes the stories come to life is Rosling’s narrative. Data analysis, for him, is a performance art.

I’ve been thinking about this because I’ve been trying to investigate a perceived crime wave in my home town. You’d think it would be straightforward to get hold of the data but, after four months, I’m still trying. Meanwhile, however, I found some historical data at the Bureau of Justice, and I decided to see what I could make of that.

The visualizations shown in today’s screencast were done with Many Eyes, which is another very cool piece of software. But what I realized while making them is that narrated animation is really the secret sauce. Analytical software, whether it’s Excel or GapMinder or Many Eyes or something else, is necessary but not sufficient. The stories that people will understand, and remember, are the ones that have been performed well.

Now I’m no Hans Rosling, and you certainly won’t see me swallow a sword at the end of this screencast — as he amazingly does at the end of this video. But I will be trying to emulate his example when I tell stories with data. And I’m struck, once again, by the way in which screencasting can bring software interaction to life.

The charts used in my screencast could have been made in Excel or in any other charting package. By making them in Many Eyes, I added the important new dimension of social analysis. So you can visit the data sets there, comment on the visualizations, and add your own visualizations. But data analysis as performance art goes beyond the snapshots produced by analytical tools. It lives in the interstitial spaces between the snapshots, traces a narrative arc, shows as it tells.

A conversation with Timo Hannay about the scientific web

As director of web publishing for Nature Publishing Group, Timo Hannay’s projects include: Connotea, a social bookmarking service for scientists; Nature Network, a social network for scientists; and Nature Precedings, a site where researchers can share and discuss work prior to publication.

The social and collaborative aspects of these systems are, of course, inspired by their more general counterparts on the web: del.icio.us, Facebook and LinkedIn, the blogosphere. That’s part of what we discussed in this week’s ITConversations podcast. We also talked about my longstanding concern that scientists, like other academics and indeed most professional people, aren’t directly rewarded for being wired into the web. Timo has some great ideas about how to change that. He notes:

This will sound a bit strange coming from someone who works for a journal publisher, but to date, the way that scientists’ output has been measured has been unduly focused on publications in peer-reviewed journals. That is, and will continue to be, a really important part of it, but it’s not the only thing they do.

Here’s one specific proposal for change — measure, and reward, contributions of data:

Biology in recent years has seen a move from what I would characterize as cottage industry science, where everything from data capture through to analysis to writing the paper happens within one lab among a small group of people, to a much more industrial scale where you have different groups, widely dispersed, perhaps who don’t even know each other, doing the data capture versus the analysis versus writing the paper.

But you can’t just publish a data set. So what tends to happen is that, for a really big important data set — like a new major genome — they’ll publish a paper off the back of it, and do a very quick preliminary analysis. But the real news is not the analysis, it’s the data set. They have to make this fig leaf of analysis in order to justify publishing the paper.

We need to make it possible for people to publish data sets — to put them out there, track what use is made of them by other people, and then eventually gain credit for that.

Excellent suggestion!

More broadly, Timo wants to measure activity in the specialized versions of the blogging, bookmarking, and social networking services that Nature Publishing Group is creating for scientists. He says NPG is working with funding organizations to figure out what kinds of measurement can support a broader system of credit and recognition.

I know it’s hard to nail down this touchy-feely stuff, but it really does matter. Yesterday I found a great quote from E.O. Wilson (in Consilience, which I’ve finally gotten around to reading) that helps explain why:

The creative process is an opaque mix. Perhaps only openly confessional memoirs, still rare to nonexistent, might disclose how scientists actually find their way to a publishable conclusion. In one sense scientific articles are deliberately misleading. Just as a novel is better than the novelist, a scientific report is better than the scientist, having been stripped of all the confusions and ignoble thought that led to its composition. Yet such voluminous and incomprehensible chaff, soon to be forgotten, contains most of the secrets of scientific success.

Narrating the work in openly confessional memoirs can and should be measurable, valuable, credit-worthy.

Show me the data

The emerging discipline of social data analysis and visualization faces two challenges. First, obviously, you need data. Then, more interestingly, you need to figure out ways for people to create, share, and collaboratively refine interpretations of the data. There are a handful of well-known and powerful sources of data. The OECD’s data, for example, drives several of the visualizations at IBM’s Many Eyes site. Where else can you find data for these kinds of tools and services to chew on?

Sources I’ve used and discussed include Washington DC’s CAPStat and the Dartmouth Atlas of Health Care. A number of others are listed in this summary from the session at Foo Camp 07 on liberating government data.

For my own purposes, I’ve decided to keep track of these kinds of public data sources at del.icio.us/judell/publicdata. One of the delightful consequences of doing things that way is that I can pop up a level, to del.icio.us/tag/publicdata, in order to find out what other folks have been storing in the publicdata bucket.

There’s not a whole lot there, yet, but here’s one gem I discovered by way of a link to Gapminder: the United Nations Common Database. From the Gapminder blog on June 7:

UN statistics finally liberated and free of charge!

In a bold move that hopefully will set the standard for all major producers of statistics, UN Statistical Division have made their data accessible and FREE OF CHARGE from May 1 this year. United Nations Common Database (UNCDB) is now available for everyone, with no demand of subscription or user fees on their web-site.

We now look forward to the domino-effect and the liberation of other hidden or locked global statistics from other producers and collectors of data.