One of the first posts I wrote with Radio UserLand, my original blogging tool, was this one from early 2002:

Messages addressed to spaces

I just realized why the idea of messages addressed to spaces hit me so hard. Email is a message addressed to a person or group, whereas blogging (or posting to a newsgroup or web forum) is a message addressed to a space. A group may or may not form at the coordinates of that space. If a group does form, people may be there at roughly the same time, or may visit serially, separated by hours or days or even years. Why does this seem so special and important to me? I’m still not completely sure; I only know that it does.

Remarkably that blog post still survives at radio-weblogs.com. The New Scientist story it cited, though, was a casualty of a content management regime change. But I remember the story. It described a scenario that was futuristic then. You’re walking around in New York City with a handheld device that knows your location; you can send messages tagged with your present coordinates; you can also receive messages tagged with your present coordinates. The physical world becomes a bulletin board carved up into an infinite number of topics. Your presence in any particular place connects you to the corresponding topic. You can read messages posted to that topic by anyone who’s been there before. If you send a message to the topic, it joins the others there and becomes available to anyone who visits in the future. Of course you don’t have to be physically present to read all the messages posted to a topic. You could look up a topic by its coordinates, and read the topic’s messages from anywhere at any time.
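To make the idea concrete, here is a minimal sketch of a bulletin board whose topics are places. Everything in it is my own illustration, not anything the New Scientist story specified: the `SpaceBoard` class, the sample coordinates and messages, and the choice to define a topic by rounding latitude and longitude to three decimal places (cells on the order of 100 meters) are all assumptions.

```python
from collections import defaultdict


class SpaceBoard:
    """Hypothetical board where messages are addressed to places, not people."""

    def __init__(self, precision=3):
        # Assumption: a "topic" is a grid cell found by rounding coordinates.
        self.precision = precision
        self.topics = defaultdict(list)

    def _key(self, lat, lon):
        return (round(lat, self.precision), round(lon, self.precision))

    def post(self, lat, lon, author, text):
        # The message joins whatever is already posted at this place.
        self.topics[self._key(lat, lon)].append((author, text))

    def read(self, lat, lon):
        # Readers need only the coordinates, not physical presence.
        # .get avoids creating an empty topic just by looking.
        return list(self.topics.get(self._key(lat, lon), []))


board = SpaceBoard()
board.post(40.7580, -73.9857, "ann", "Great pretzel cart on this corner")
board.post(40.7581, -73.9856, "bob", "The cart is closed on Mondays")
nearby = board.read(40.7580, -73.9857)
```

Ann and Bob never address each other, yet `nearby` holds both messages because both were posted to the same cell. A real service would need something smarter than rounding, since two people standing a few steps apart can land on opposite sides of a cell boundary.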

This was a beautiful example of one of the seven ways to think like the web:

6. Participate in pub/sub networks as both a publisher and a subscriber

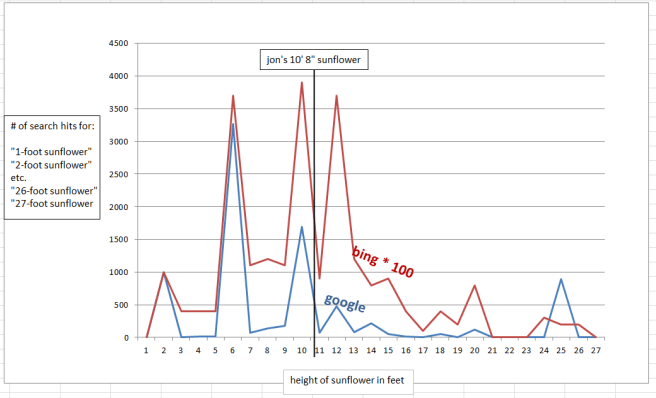

What the New Scientist described in 2002 may have been an early incarnation of Dodgeball, which begat Foursquare: a pub/sub network organized around a dynamic set of topics. Anyone can create a topic, post messages to a topic, and read messages posted to a topic. The game itself holds no attraction for me. I have never claimed to be the mayor of a bar or an ice cream shop, and I never will. But the pub/sub principle that Foursquare embodies is profoundly important. Wikipedia says there were six million registered Foursquare users in December 2010. I recently registered myself and was given a number just north of 8 million. I’m happy to know that something like that many people have experienced Foursquare’s mode of pub/sub networking. My hope is that as more folks see the underlying communication pattern through the lens of Foursquare, as well as through the lenses of blogging, Twitter, Facebook, and other services, more will grasp the essence of the pattern and find other ways to apply it.

High on the list of other possible ways, for me, is internal corporate communication. In many companies large and small, the dominant paradigm, interpersonal messaging, fails. Sue, who is assigned to Project A, which is hosted on Site X, reaches a milestone and makes a public release. Frank, also assigned to Project A, writes public blog post P in support of the release. Time passes. Sue leaves Project A, Roger joins. Also Frank leaves, and Tom joins. Roger rehosts Project A on Site Y, and makes a new release that invalidates Tom’s blog post. How can Tom (who inherited the blog from Frank) know that he needs to update it based on new work by Roger (who inherited Project A from Sue)?

He probably can’t. To understand why not, let’s start with this diagram:

The solid blue arrows are messages that flow among the four human players in this drama: Sue, Roger, Frank, and Tom. Those messages refer to four non-human players, which I’ll call topics: Project A, Blog Post P, Site X, Site Y. The next diagram shows some of those references as dotted black arrows:

Any message can mention one or more topics. If I showed all the possible references the diagram would be very cluttered, so I won’t, but just imagine black dotted lines from every message path to every topic. Do you see what’s still missing? Two critical things:

1. There are no links connecting people to topics.

2. There are no links connecting topics to other topics.

Now let’s redraw the diagram for an environment where there isn’t just person-to-person messaging, but also person-to-topic, topic-to-person, and topic-to-topic. At this point Sue has reached her Project A milestone, made a public release to Site X, and notified Frank, who has written it up as Blog Post P.

In the previous diagram I said the dotted black arrows were references. When Sue wrote to Frank to notify him that she’d reached the Project A milestone, she mentioned the name, Project A, in the subject of her email, or in the body, or both. She also mentioned the name Site X, and its associated URL. When Frank wrote back to Sue to show her Blog Post P, he mentioned its name and associated URL.

In this new diagram, though, I mean something different by the black dotted arrows. Now they are message paths. When Sue reached her Project A milestone, she sent a message to a person, Frank, alerting him to that fact. That same message also went to a topic, Project A. Frank could then subscribe to the Project A topic, and find out about things that Sue, or others connected to Project A, knew but didn’t bother to tell him.

If there’s little message traffic flowing through the Project A topic, Frank won’t mind watching it. If the topic gets noisy, he can unsubscribe. But the topic still exists; people who know about it can read its messages; people who don’t know about it can find the topic and its messages by searching. It should go without saying, but this is crucial: topics must be discoverable and searchable.
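Here is a rough sketch of that behavior: subscribing, unsubscribing, and an archive that stays searchable either way. The `Topic` and `Hub` classes, their method names, and the sample messages are hypothetical, invented for illustration rather than taken from any existing product.

```python
class Topic:
    def __init__(self, name):
        self.name = name
        self.archive = []       # every message posted, kept for later readers
        self.subscribers = {}   # subscriber name -> inbox (a plain list)

    def subscribe(self, who, inbox):
        self.subscribers[who] = inbox

    def unsubscribe(self, who):
        self.subscribers.pop(who, None)

    def post(self, sender, text):
        # Archive first, so the message survives even with no subscribers.
        self.archive.append((sender, text))
        for inbox in self.subscribers.values():
            inbox.append((self.name, sender, text))


class Hub:
    """Hypothetical registry that makes topics discoverable and searchable."""

    def __init__(self):
        self.topics = {}

    def topic(self, name):
        if name not in self.topics:
            self.topics[name] = Topic(name)
        return self.topics[name]

    def search(self, term):
        term = term.lower()
        return [(t.name, sender, text)
                for t in self.topics.values()
                for sender, text in t.archive
                if term in text.lower()]


hub = Hub()
frank_inbox = []
hub.topic("Project A").subscribe("Frank", frank_inbox)
hub.topic("Project A").post("Sue", "Milestone reached, release is on Site X")
hub.topic("Project A").unsubscribe("Frank")
hub.topic("Project A").post("Sue", "Fixed a typo in the release notes")
found = hub.search("typo")
```

Frank's inbox ends up with only the first message, but the topic's archive holds both, and `found` turns up the second one for anyone who searches, subscribed or not.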

Frank, meanwhile, by creating Blog Post P, has also created a new topic. Part of what happens next is already common. It’s a blog, therefore it publishes a feed to which any interested party can subscribe. Less common but still not unheard of, in the current state of play, is for the blog post to not only publish feeds (e.g., a feed of blog posts, also per-post feeds of comments) but also subscribe to feeds. So Frank might configure Blog Post P to receive a feed from Project A. When Roger reaches the next Project A milestone, he — or, preferably, his deployment software — posts a message to that effect to the Project A topic. Blog Post P, as a subscriber to the topic, will receive and can optionally republish the message.

Sue, meanwhile, by hosting her Project A release on Site X, has created (or joined) the Site X topic. When she posts the release to Site X, she links it to Project A. The link, in this case, is bidirectional. When Project A reaches a milestone, Site X is notified. And when Site X schedules downtime for an upgrade, Project A is notified.
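A bidirectional topic-to-topic link might be sketched like this. The class, the message-id scheme, and the sample messages are assumptions of mine. The one real design point is that a two-way link creates a cycle, so each topic has to remember which messages it has already seen or a notification would bounce back and forth forever.

```python
import itertools

_message_ids = itertools.count(1)  # hypothetical global id source


class Topic:
    def __init__(self, name):
        self.name = name
        self.archive = []   # (origin topic, text) pairs
        self.links = []     # topics this one forwards to
        self._seen = set()  # ids of messages already handled

    def link(self, other):
        """Link two topics in both directions."""
        if other not in self.links:
            self.links.append(other)
        if self not in other.links:
            other.links.append(self)

    def post(self, text):
        self._deliver(next(_message_ids), self.name, text)

    def _deliver(self, msg_id, origin, text):
        if msg_id in self._seen:
            return  # already handled: stop here so the link cannot loop
        self._seen.add(msg_id)
        self.archive.append((origin, text))
        for other in self.links:
            other._deliver(msg_id, origin, text)


project_a = Topic("Project A")
site_x = Topic("Site X")
project_a.link(site_x)

project_a.post("Milestone 1 released")
site_x.post("Downtime scheduled for an upgrade")
```

After these two posts, both archives contain both messages, each tagged with the topic it originated from.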

Now let’s wind the clock forward. Frank and Sue have both left Project A. Frank no longer cares about Blog Post P, and Sue no longer cares about Project A. Roger has inherited Sue’s development role, and Tom has inherited Frank’s public relations role. At this point, however, Roger hasn’t yet reached the next milestone, and so there’s been no need for Tom to revisit Blog Post P. Here’s the picture:

This is what can’t happen in organizations that rely solely on interpersonal messaging. The system retains traces of the connections among Project A, Site X, and Blog Post P. When Roger takes over for Sue, he starts sending messages to Project A, which in turn notifies Blog Post P and Site X. When Roger moves Project A from Site X to Site Y, he redirects that link for all subscribers to the Project A topic. When Roger reaches the next Project A milestone, his message to that effect reaches anyone now subscribed to Site Y. It also reaches Tom, who subscribed to Project A when he took over from Frank.
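The whole handover can be played out in a few lines. This is only a sketch under my own assumptions: the `Topic` class, the plain-list inboxes, and the message texts are all made up, and a real system would handle identity, permissions, and persistence that I am ignoring here.

```python
class Topic:
    def __init__(self, name):
        self.name = name
        self.archive = []  # (sender, text) pairs, retained across handovers
        self.people = {}   # person name -> inbox list
        self.topics = []   # downstream topics that receive our messages

    def post(self, sender, text, _seen=None):
        seen = _seen if _seen is not None else set()
        if self.name in seen:
            return  # guard against cycles among linked topics
        seen.add(self.name)
        self.archive.append((sender, text))
        for inbox in self.people.values():
            inbox.append((self.name, sender, text))
        for topic in self.topics:
            topic.post(sender, text, seen)


project_a, blog_p = Topic("Project A"), Topic("Blog Post P")
site_x, site_y = Topic("Site X"), Topic("Site Y")
project_a.topics = [site_x, blog_p]

frank_inbox, tom_inbox = [], []
project_a.people["Frank"] = frank_inbox
project_a.post("Sue", "Milestone 1 released on Site X")

# Handover: Frank leaves, Tom subscribes in his place.
del project_a.people["Frank"]
project_a.people["Tom"] = tom_inbox

# Roger rehosts: the Site X link is redirected to Site Y.
project_a.topics[project_a.topics.index(site_x)] = site_y
project_a.post("Roger", "Milestone 2 released on Site Y")
```

Tom's inbox now holds Roger's milestone message even though Roger never wrote to Tom. Blog Post P has received both milestones, Site Y has the new one, and Site X hears nothing further. And because the Project A archive keeps Sue's original message, Tom can read back through the history he inherited.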

In theory everyone talks to everyone and everything gets taken care of. In practice, as we know, not so much. Interpersonal messaging alone can’t create a resilient and discoverable web of connections. That’s why interpersonal messaging must be embedded in a pub/sub network where messages flow person-to-person, person-to-topic, topic-to-person, and topic-to-topic.

There isn’t, and never will be, a single product or service that implements this alternative architecture of communication. Many aspects of it are already available to us. You can do a lot of this stuff with internal blogging and social bookmarking, or with an email system, or with a combination of these modes, if people are thoughtful about naming conventions and if the topic archives are discoverable and searchable. But the model is abstract. To make it concrete we’ll need systems that help us use all of our existing communication tools — and all the new ones in the pipeline — to enact this pattern of communication.